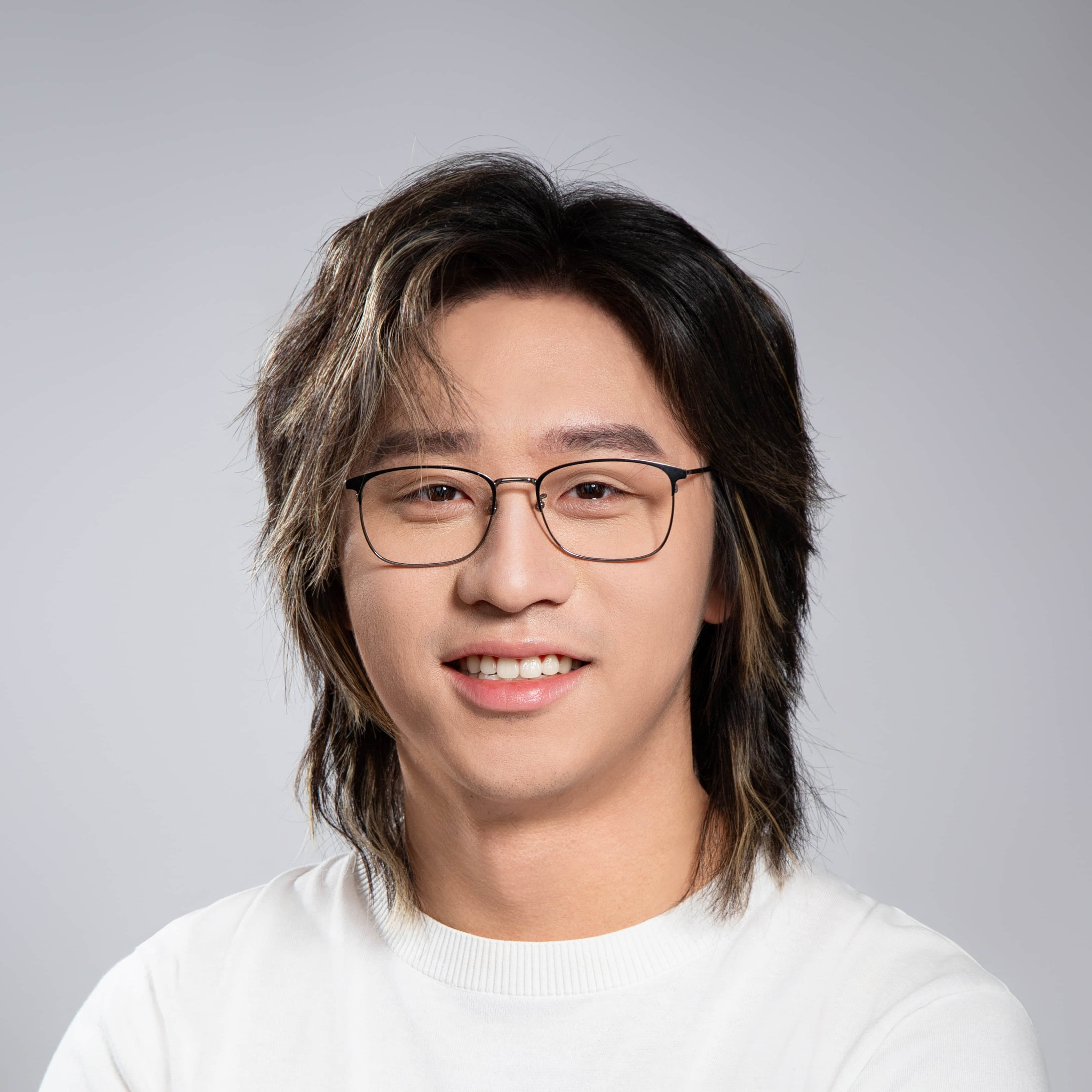

Hello, this is Zhangchen Xu (徐张晨).

Bio

I’m currently on leave from my PhD at the University of Washington, where I am advised by Prof. Radha Poovendran. I am building a new startup 🍞 that works on the foundations of ASI.

We’re hiring researchers and engineers. If you want to work with me, contact me at: [my first name] dot [my last name] [at] outlook [dot] com

Research Interests

I work on Generative AI, with a current focus on the evaluation, synthetic data generation, post-training, and safety of large language models (LLMs). My current research directions include:

Model Evaluation

Public evaluations and benchmarks I have led:

- AutoLab is an open benchmark for evaluating AI agents on frontier research tasks across system optimization and ML development.

- VAB is an open benchmark for evaluating how well frontier AI models judge visual artistic quality.

Synthetic Data Generation

I conduct data-centric research focused on enhancing LLMs with synthetic data.

- 🐦 Magpie [ICLR’25] is a family of SOTA synthetic datasets for LLM alignment, adopted by Hugging Face SmolLM, LLaMA-MoE, LLaVA-OneVision, Alibaba VideoLLaMA, DeepSeek-VL, and Skywork-Reward.

- 🐱 KodCode [ACL’25] is the largest fully synthetic open-source dataset providing verifiable solutions and tests for LLM coding, adopted by Kimi K2.

- 🦁 VisualSphinx is a synthetic open-source dataset for visual logic reasoning.

- 🦤 Toucan is the largest open-source tool-agentic dataset for post-training, adopted by MiroThinker.

LLM Post-Training

- Model distillation from powerful LLMs to smaller models. My analysis papers on this topic include:

  - Larger Models’ Paradox [NAACL’25] examines the choice of response generators for LLM alignment.

  - Small Model Learnability Gap [ACL’25] investigates how to let small models (≤3B parameters) benefit from long chain-of-thought (CoT) reasoning via distillation.

- Reinforcement learning for enhanced reasoning ability. My papers on this topic include:

  - TinyV investigates the impact of false negatives in Reinforcement Learning with Verifiable Rewards (RLVR).

  - Temporal Sampling [ACL’26] examines the phenomenon of Temporal Forgetting during LLM post-training.

LLM Safety

I investigate emerging threats in LLMs (e.g., ArtPrompt [ACL’24], ChatBug [AAAI’25], SafeChain [ACL’25]) and explore inference-time defenses (e.g., SafeDecoding [ACL’24], CleanGen [EMNLP’24], Shield [AsiaCCS’24]).

Distributed Algorithms

I also worked on distributed algorithms during my undergraduate studies and early PhD.

- Federated Learning. Work includes ACE [USENIX’24] (contribution evaluation attack) and Brave [AsiaCCS’24].

- Distributed Consensus. Work includes Voting Validity [IPDPS’23], Wireless Distributed Consensus [TVT], and Distributed Consensus Network.

Selected Work (see here for full publication list)

KodCode: A Diverse, Challenging, and Verifiable Synthetic Dataset for Coding

Zhangchen Xu, Yang Liu, Yueqin Yin, Mingyuan Zhou, Radha Poovendran

ACL 2025 (Findings) | Paper / Website / Huggingface / Code

🏆 Best Paper Award at DataWorld @ ICML 2025!